GPT-5.5 arrives with a strange kind of pressure: it has to beat Claude Opus 4.7 in public, answer the Mythos hype in private, and prove that smarter AI can still make economic sense.

That is a lot for one model to carry.

But this is also why GPT-5.5 feels important. It is not being launched into a quiet market. It is entering an AI race where every new model is judged in three different ways at once: Is it smarter? Is it useful? And is it worth the money?

For normal users, that last question may be the most important one. A model can win benchmarks all day, but if it feels slow, expensive, robotic, or difficult to use, the excitement fades quickly. GPT-5.5 seems designed to avoid that problem. OpenAI is presenting it not only as a more intelligent model but also as one built for real work: coding, research, data analysis, documents, spreadsheets, tool use, and longer tasks that need more than one clean answer. OpenAI describes GPT-5.5 as better at understanding tasks earlier, needing less guidance, using tools more effectively, and continuing through work with stronger self-checking.

GPT-5.5 is trying to be a better worker.

The AI Race Has Moved Beyond “Who Is Smarter?”

For a long time, AI model launches had a familiar rhythm. A company released a model, posted benchmark charts, showed coding examples, and everyone argued about whether it was better than the last one.

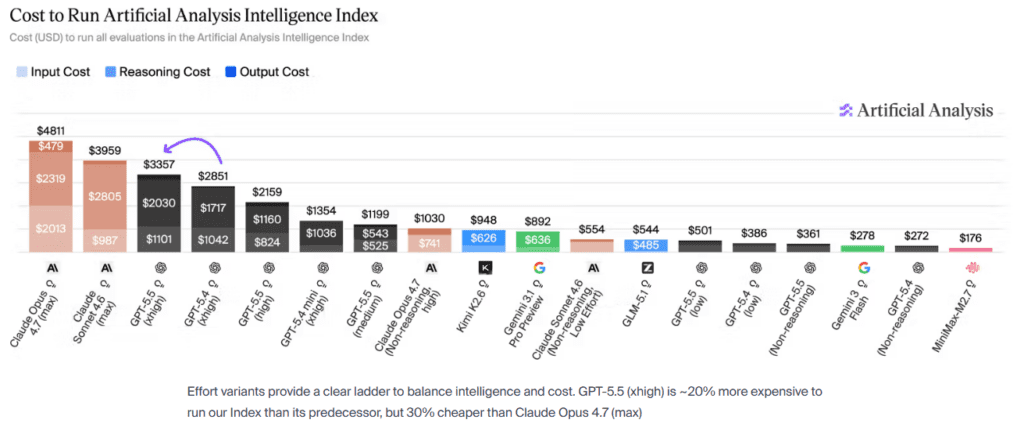

That still matters. GPT-5.5 is already being described as a major jump, with Artificial Analysis placing it at the top of its Intelligence Index and saying it gives OpenAI a clear lead again.

But the more interesting part is not only raw intelligence. The real question now is whether the model can carry a task from beginning to end.

- Can it plan?

- Can it use tools?

- Can it check its own work?

- Can it write code, notice bugs, fix them, and keep going?

- Can it help a normal person finish something useful without turning the whole process into a long fight?

This is where GPT-5.5 seems to matter most. It is being described less like a “question-answering” machine and more like an agentic system. That means it is built for workflows, not just replies.

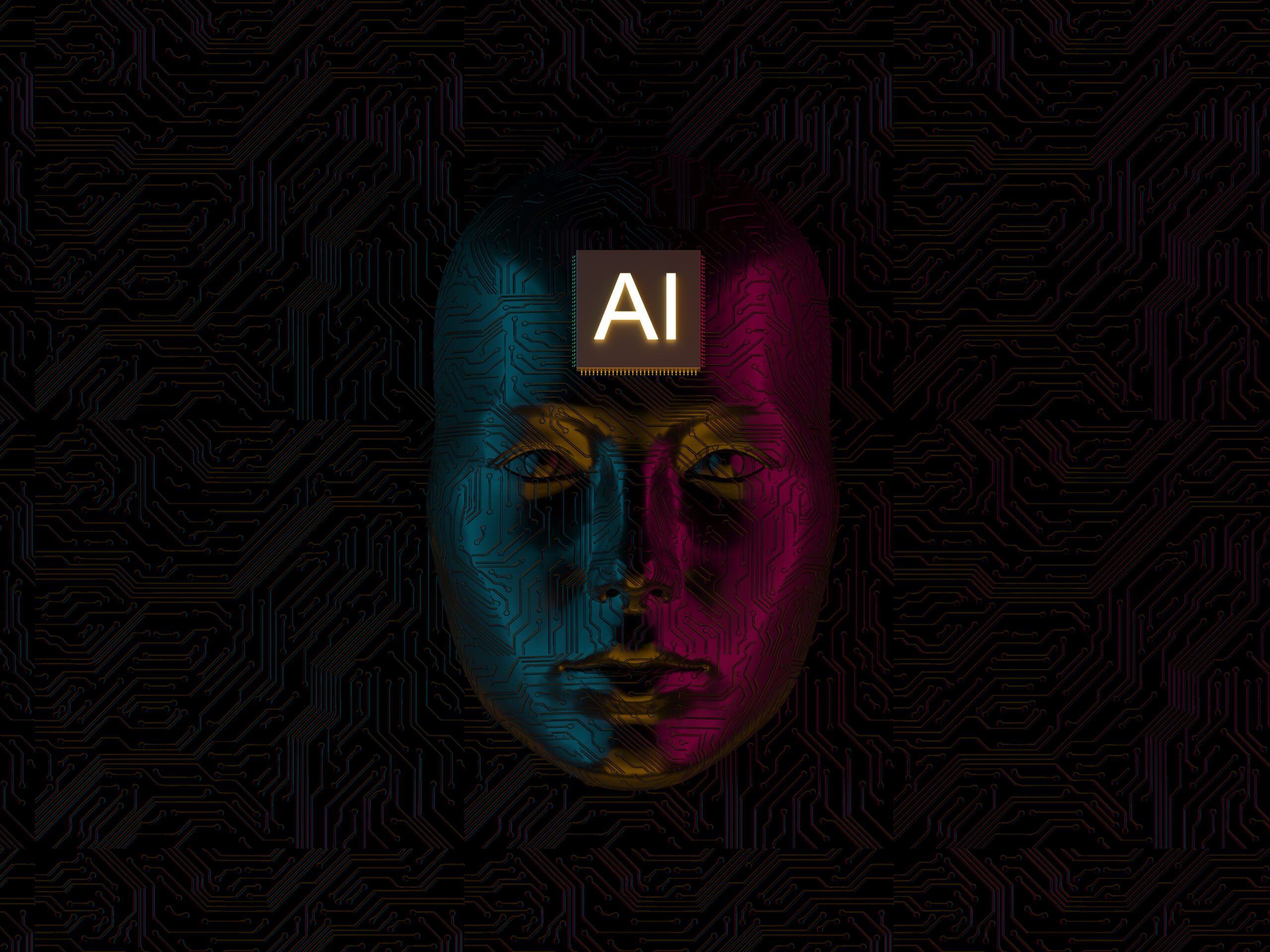

GPT-5.5 vs Claude Opus 4.7: The Public Model Fight

The clearest comparison is GPT-5.5 vs Claude Opus 4.7.

In our earlier Millionero coverage of Claude Opus 4.7 and the hype around Mythos, the main point was that Anthropic’s story had two layers. Opus 4.7 was the powerful public model. Mythos was the more mysterious model sitting behind it, surrounded by stronger claims, stronger restrictions, and stronger curiosity.

GPT-5.5 now pushes directly into that same territory.

Claude Opus 4.7 is still a serious model. Anthropic presents it as its most capable generally available model, especially for coding, agentic work, and knowledge-heavy tasks. But GPT-5.5 seems to be OpenAI’s attempt to take back the public lead, especially in the areas that matter most for everyday professional use.

That does not mean Opus 4.7 suddenly becomes irrelevant. It means the comparison changes.

Before GPT-5.5, the question was: Can Opus 4.7 hold the frontier while Anthropic keeps Mythos restricted?

Now the question is: If GPT-5.5 is publicly available and stronger across common work tasks, how much does Mythos’ restricted power matter to normal users?

That is the pressure GPT-5.5 creates.

Mythos Is Still the Shadow in the Room

The Mythos comparison is more complicated.

Some early commentary framed GPT-5.5 as being close to Mythos-level. Some hype accounts went even further, treating it as a direct Mythos rival. But the more careful view is that GPT-5.5 may be better for most users, while Mythos may still be stronger in certain specialized areas, especially cyber-related tasks.

Anthropic itself has been careful around this topic. In its Claude Opus 4.7 release, the company said it added safeguards to detect and block prohibited or high-risk cybersecurity uses, and that real-world deployment of those safeguards would help it move toward broader release of Mythos-class models.

That tells us something important. Mythos is not just another chatbot upgrade. It is being treated as a model class with higher-risk capabilities.

So the balanced comparison looks like this: GPT-5.5 is probably the stronger public product right now. Mythos may still be stronger in narrow, restricted, high-risk domains.

For most people, that is not a small distinction. A model that is powerful but unavailable is less useful than a model that is powerful, accessible, and integrated into tools people already use.

The Efficiency Question: Better, But at What Cost?

This is where GPT-5.5 needs to prove itself.

The launch is not only about capability. It is also about economics. OpenAI says GPT-5.5 keeps strong latency while improving performance and using fewer tokens in Codex tasks. Reports around the release also highlight its improvements in coding, debugging, research, productivity, and multi-step work.

That sounds great, but it raises a real question: does using fewer tokens per task actually make the model cheaper to use, or does the higher model cost cancel that out?

This is where the debate becomes practical.

For a casual user, the answer may not matter much. If GPT-5.5 gives better answers, writes cleaner drafts, and helps finish work faster, that is enough.

For developers and companies, the math is different. They care about the full cost of a finished task. That includes prompt tokens, output tokens, tool calls, failed attempts, human correction, and time wasted fixing errors. A model that looks expensive at first can still be cheaper if it finishes the job with fewer retries.

So GPT-5.5 may not be “cheap” in the simple sense. But it may be more efficient in the only sense that matters: the cost of getting real work done.
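To make that trade-off concrete, here is a minimal Python sketch of the "cost per finished task" idea. Everything in it is an assumption for illustration: the `ModelProfile` fields, the two example profiles, and every price, token count, and success rate are made up and are not published figures for GPT-5.5 or any other model. It also assumes each retry is independent with the same chance of success, so the expected number of attempts is 1 divided by the success rate.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    """Hypothetical per-attempt numbers for one model (not real prices)."""
    input_price_per_mtok: float    # USD per million prompt tokens (assumed)
    output_price_per_mtok: float   # USD per million output tokens (assumed)
    prompt_tokens: int             # prompt tokens used per attempt
    output_tokens: int             # output tokens used per attempt
    success_rate: float            # chance one attempt finishes the task
    review_cost_per_failure: float = 0.0  # USD of human time per failed attempt


def cost_per_attempt(m: ModelProfile) -> float:
    """Token cost in USD of a single attempt, successful or not."""
    token_cost = (m.prompt_tokens * m.input_price_per_mtok
                  + m.output_tokens * m.output_price_per_mtok)
    return token_cost / 1_000_000


def expected_cost_per_finished_task(m: ModelProfile) -> float:
    """Expected USD cost until one attempt succeeds.

    Assumes independent retries, so expected attempts = 1 / success_rate
    and every attempt before the last one is a failure that needs review.
    """
    if not 0 < m.success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    expected_attempts = 1 / m.success_rate
    expected_failures = expected_attempts - 1
    return (expected_attempts * cost_per_attempt(m)
            + expected_failures * m.review_cost_per_failure)


# Two made-up profiles: a pricier model that rarely needs a retry,
# and a cheaper model that uses more output tokens and fails more often.
pricier = ModelProfile(5.0, 30.0, 20_000, 4_000,
                       success_rate=0.9, review_cost_per_failure=0.50)
cheaper = ModelProfile(2.0, 10.0, 20_000, 6_000,
                       success_rate=0.5, review_cost_per_failure=0.50)

for name, profile in (("pricier", pricier), ("cheaper", cheaper)):
    print(f"{name}: per attempt ${cost_per_attempt(profile):.2f}, "
          f"per finished task ${expected_cost_per_finished_task(profile):.2f}")
```

With these invented numbers, the pricier model costs more per attempt but less per finished task, because the cheaper model's retries and human review time add up. Different inputs can flip the result, which is why developers and companies need to plug in their own measured prices, token counts, and failure rates.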

The Image Model Battle: OpenAI vs Nano Banana

GPT-5.5 is not the only piece of OpenAI’s wider push. The image side matters too.

OpenAI’s latest image generation model, ChatGPT Images 2.0, is another sign that the company is thinking in workflows. It is not only about making a nice picture from a prompt. OpenAI highlights better text rendering, multilingual support, stronger style control, and use cases across photography, illustration, manga, posters, editorial design, and print-ready visuals.

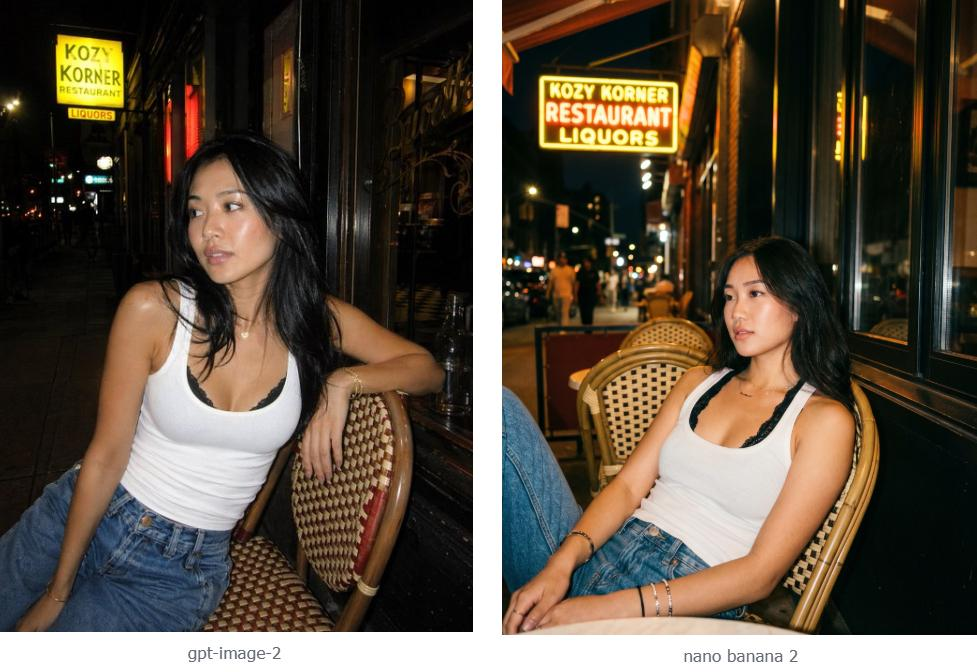

That puts it naturally against Google’s Nano Banana image model line, which has been praised for fast, flexible image creation and editing.

Source: Reddit post (see the post for the original prompt).

The difference is in the direction.

Nano Banana feels more like a fast creative image tool. It is good for quick edits, visual experiments, and turning prompts into polished images with less friction.

ChatGPT Images 2.0 feels more like part of a larger creative assistant. It can sit inside a conversation where the user is also planning, writing, comparing, editing, and building a full asset. That matters because many people do not only want “an image.” They want a thumbnail, a poster, a blog graphic, a product mockup, or a visual idea that matches a larger piece of work.

That is where OpenAI’s advantage becomes clearer. The image model is not standing alone. It is connected to the same assistant that can write the caption, explain the concept, revise the layout, and help shape the final output.

The Simple Takeaway

GPT-5.5 is better. But the more interesting point is how it is better.

It does not feel like OpenAI only wanted to win another benchmark chart. It feels like OpenAI wanted to make a model that can sit inside real work and carry more of the weight.

Against Claude Opus 4.7, GPT-5.5 looks like the stronger public release. Against Mythos, the answer is more careful: Mythos may still be more powerful in certain restricted technical areas, but GPT-5.5 is the model most people can actually use.

And with ChatGPT Images 2.0, OpenAI is pushing the same idea into visuals. The goal is not just smarter text or prettier images. The goal is a system that helps users move from idea to finished work with fewer steps in between.

That is the real story behind GPT-5.5. The AI race is no longer only about intelligence. It is about usefulness, access, efficiency, and trust.

As always, this article is for informational purposes only and should not be taken as financial advice. Readers should do their own research, follow new AI and market developments carefully, and explore more updates on the Millionero blog. When ready, users can also trade spot and perpetual markets on Millionero.