Anthropic has released Claude Opus 4.7, calling it a step up from Opus 4.6 in coding, image understanding, and instruction-following. On paper, that is the clean headline. But the bigger story may be what sits behind it: Claude Mythos, the unreleased model Anthropic says is even stronger, especially in cybersecurity.

That has turned this launch into more than a product update. It is now a story about AI competition, government tension, security claims, and timing.

What Anthropic says about Opus 4.7

Anthropic says Opus 4.7 improves on Opus 4.6 in the tasks many developers care about most.

Where Opus 4.7 is meant to be better

According to Anthropic, Opus 4.7 is better at:

- hard coding work

- long tasks with many steps

- following instructions more closely

- working with higher-resolution images

The company also says it kept the same pricing as Opus 4.6, which makes the upgrade easier to sell.

Opus 4.7 looks like a more polished public model. It is built to feel more reliable, not just more powerful.

But Anthropic itself says Mythos is stronger

This is where the story gets interesting.

Anthropic has openly said Mythos Preview is more powerful than Opus 4.7 in cybersecurity. In fact, Anthropic says Opus 4.7 does not even advance its capability frontier, because Mythos scores higher on the relevant tests.

Why Mythos is getting so much attention

Anthropic says Mythos found high-severity software flaws across major operating systems and browsers. The company then launched Project Glasswing, a joint effort with major companies including Amazon, Apple, Cisco, Google, Microsoft, NVIDIA, and JPMorganChase, to fix problems first and widen access later.

That sounds dramatic, and that is exactly why it is working as a narrative.

Anthropic is telling the market: we built something so strong we cannot release it widely yet. That is a powerful message, because it creates both fear and prestige at the same time.

The problem with the Mythos story

Here is the pushback.

Saying Mythos found a lot of vulnerabilities does not automatically mean Anthropic alone has reached some impossible new level. The wider security world has already been saying that AI models in general have become much better at finding bugs this year.

NPR recently reported that maintainers of major software projects are seeing AI-generated bug reports become far more useful. OpenAI has also been pushing its own cyber work, saying its models are improving fast in defensive security tasks, and it has launched programs like Aardvark and GPT-5.4-Cyber.

Why this may be more marketing than miracle

That does not mean Mythos is fake. It means the framing may be too convenient.

Anthropic is presenting Mythos as a near-mythical machine that found huge numbers of flaws, while also limiting outside visibility into what exactly was found, what was patched, and what other models could have caught too.

So the fair argument is this: Mythos may be strong, but the idea that only Mythos can do this looks overstated.

Other frontier models can also help find vulnerabilities. Anthropic may simply be the one doing the loudest packaging around that fact right now.

The Pentagon fight adds more tension

Anthropic is also dealing with a public clash with the U.S. defense world.

The dispute grew after Anthropic pushed back on the use of its technology for autonomous weapons and mass surveillance. In response, the Pentagon labeled the company a supply chain risk, and that turned into a larger fight over whether the government should work with Anthropic at all.

Why this matters now

This makes the Mythos timing even more awkward.

On one side, Anthropic is saying its strongest cyber model can help secure critical systems. On the other, it is fighting with parts of the U.S. government over trust, access, and military use.

That contradiction gives the whole rollout a strange feel. Anthropic wants to sound like the adult in the room, but it is also in the middle of a very public power struggle.

OpenClaw shows a different kind of Anthropic problem

Anthropic has also upset developers by cutting off normal Claude subscription access for third-party tools like OpenClaw, pushing users toward extra paid usage or API billing.

That may look like a business choice, but it also created frustration in the developer world.

And this is where OpenAI keeps slipping in

This is becoming a pattern.

When Anthropic creates friction, OpenAI often gets the opening.

After the OpenClaw pricing and access mess, OpenAI looked friendlier to that crowd. After the Mythos cybersecurity splash, OpenAI quickly pushed its own cyber message harder. Anthropic builds the drama, and OpenAI often arrives right after with a smoother commercial pitch.

That does not mean OpenAI wins every round. But it does mean Anthropic keeps leaving gaps that rivals can use.

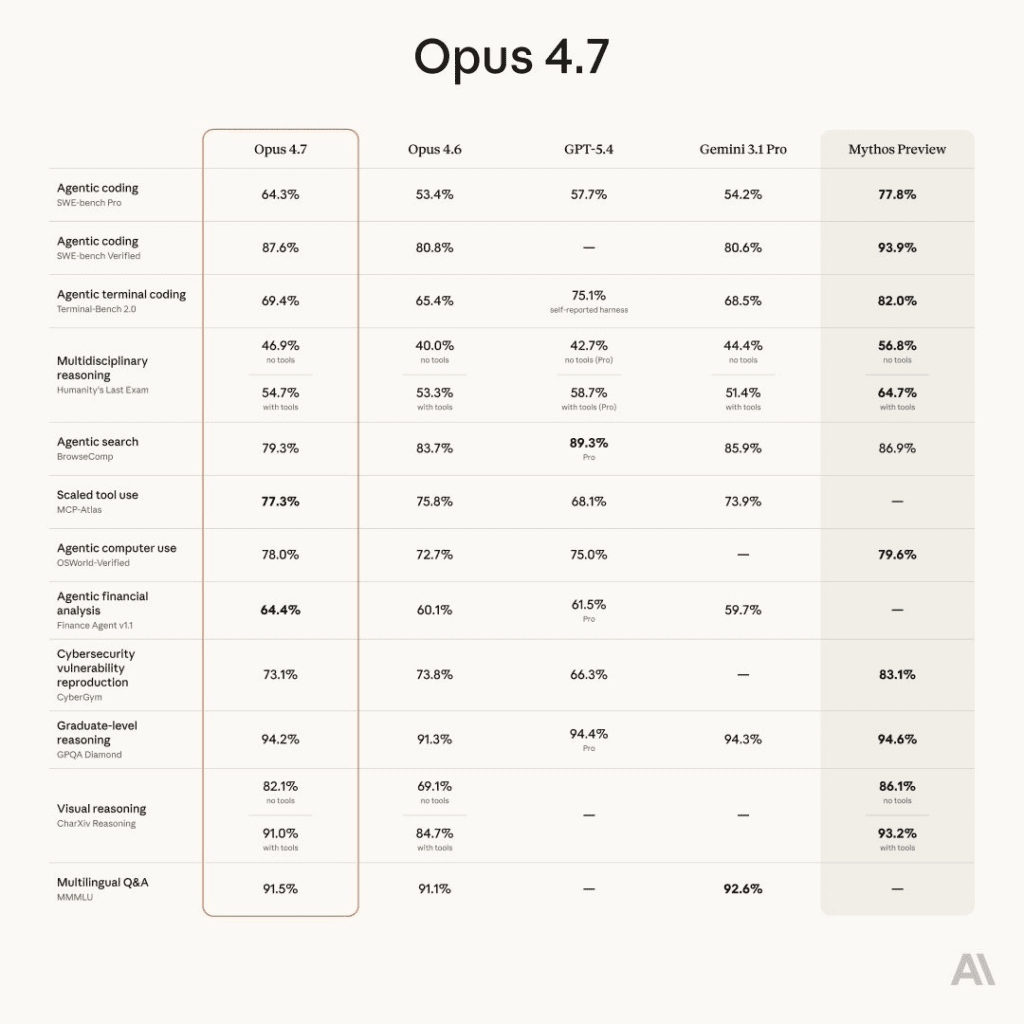

How Opus 4.7 compares with Opus 4.6, GPT-5.4, and Mythos

Opus 4.7 vs Opus 4.6

Opus 4.7 looks like a practical upgrade. It is meant to be steadier, better at difficult coding work, and easier to trust on longer tasks.

Opus 4.7 vs Mythos

Mythos is the stronger and more mysterious model, but it is also the one most users cannot access. That limits its real market value, at least for now.

Opus 4.7 vs GPT-5.4

GPT-5.4 still matters because OpenAI is pushing hard on both coding and cyber defense. So even if Anthropic has the more dramatic security story this week, OpenAI is close enough that the gap may be more about positioning than total capability.

Final take

Claude Opus 4.7 looks like a real upgrade. But the bigger headline is Anthropic’s attempt to turn Mythos into both a warning and a badge of superiority.

That may help Anthropic win attention, but it also feels like a heavy marketing play wrapped around a real technical step.

The question that matters is not whether Mythos found bugs. It probably did. The real question is whether that ability is truly unique.

Right now, the answer looks simple: probably not.
